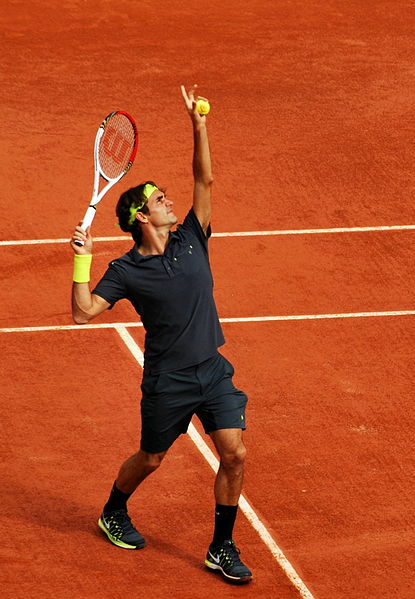

PIs frequently face the problem of deciding whether to resubmit or to write a new application. Imagine a game of dice with the following rules: you roll a regular die, and you win if you get a six; however, you never get to see the face of the die when it stops rolling; someone else does. If the die shows a four or a five, you are asked to roll again. If you rolled a four on the first roll, you always lose, whatever the second roll turns up. However, if you rolled a five on the first roll, someone will toss a coin privately. If it lands on heads, you win regardless of the result of your second roll; if it lands on tails, only rolling a six is a win. Would you roll the die again, or would you pass on the opportunity and start afresh?

If you knew you had a five, you would resubmit because your chances of winning are much higher. This is often the case if you resubmit early and the review panel has not changed. But sometimes panel members change, and the new members mostly view your resubmission as a new application (a new roll of the die). And if you knew you had a four, no amount of rewriting effort would help, and you would submit a new application.
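To put rough numbers on the game, here is a minimal sketch of the odds. It rests on assumptions the game description does not spell out: the die and the coin are fair, "starting afresh" counts as a single new first roll with no further re-roll, and the panel has not changed (so the second roll is not treated as a brand-new one). The function and variable names are made up for illustration.

```python
from fractions import Fraction

# Assumptions (not stated in the post): fair six-sided die, fair coin,
# and a fresh start is scored as one new first roll with no re-roll.
P_SIX = Fraction(1, 6)
P_HEADS = Fraction(1, 2)


def win_after_reroll(first_roll: int) -> Fraction:
    """Chance of winning when you roll again after a first roll of 4 or 5."""
    if first_roll == 4:
        # A four always loses, whatever the second roll shows.
        return Fraction(0)
    if first_roll == 5:
        # Heads wins outright; on tails only a six on the second roll wins.
        return P_HEADS + (1 - P_HEADS) * P_SIX
    raise ValueError("a second roll is only offered after a four or a five")


# Fresh start: you need a six on a single new roll.
fresh_start = P_SIX

# Not knowing the first roll: four and five are equally likely
# once you have been asked to roll again.
reroll_unknown = (win_after_reroll(4) + win_after_reroll(5)) / 2

print("known five, roll again:", win_after_reroll(5))
print("known four, roll again:", win_after_reroll(4))
print("unknown, roll again:   ", reroll_unknown)
print("start afresh:          ", fresh_start)
```

Under these assumptions a known five is worth 7/12, a known four is worth nothing, and rolling again blind is worth 7/24, against 1/6 for a fresh start. The gap between the four and the five is the reason it pays to find out which one you rolled.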

So, how does one determine whether a four or a five turned up on the first roll of the die?

Reviewer feedback could guide you, but the most critical document to help you determine your chances is the summary in which the session chair captures your grant’s most important problems. So before you start addressing the comments of each reviewer in your resubmission, consider that summary first. Where is the real problem? You may have to read between the lines to find out.

Are you the problem? Investigator is one of the big five evaluation criteria (Innovation, Significance, Approach, INVESTIGATOR, Environment). Do most comments across all five criteria point back to you, directly or indirectly? Typical signs are a lack of confidence in your ability to manage a complex operation involving many participants and a large budget; a lack of confidence in your research expertise when no senior PI acts as collaborator or mentor; and a lack of confidence in your choice of collaborators (they are your friends, present more by courtesy than by necessity; or they are there by necessity, but you have never worked with them on earlier projects or written papers together, so they present a risk). In that case, it may be better to rewrite your application with a better team.

Is significance the problem? Perhaps, to gain significance, you added specific aims that make your project too complex, given the wide variety of methods used or the variety and cost of the equipment involved. Perhaps you relied on too many assumptions; for example: if this is available, and that comes to fruition, and if the other thing happens, the research would have large implications. Or perhaps you relied on the most iffy specific aim, the one with no preliminary data or work. Significance is intimately linked to innovation. With little innovation, the significance is low because the next steps, i.e. the opportunities for further research your grant will open, are too limited. It may be better to write a new application with more realistic and better supported aims, or with a greater focus on what happens next.

Is vagueness the problem? The comment “lack of detail” often hides a larger problem, one that is remedied not by piling on unneeded details, but by adding details that help reviewers assess your expertise and project planning skill. “Lack of detail” may indeed reveal that your plan is not worked through. But it may also be that you assumed the reviewers knew much more than they actually did, which made you leave out the justification for the approach or methods. Pity, for it would have shown that you understand their strengths and limitations in a technique-rich landscape. You did not deem it necessary to add expert details or references that show you know and care about the known pitfalls of methods, statistics, and data collection. The “I’m-aware-of-that” detail is exactly what the reviewer needs to assess your competency. Finally, vagueness in the research question will inevitably lead to “lack of detail” comments, so clarify and resubmit.

Is lukewarm support from the panel the problem? Again, do not be fooled by mildly supportive, ho-hum comments intended more to prevent your nervous breakdown than to compliment you. Your research question may simply not be exciting enough, or it may be too insignificant. It needs to be looked at again. Write a new application when you have a better idea with greater significance.

The recommendations above are based on episode 63 of “The Effort Report” podcast by two eminent PIs with extensive panel and submission experience: Elizabeth Matsui and Roger Peng.

I thoroughly enjoyed reading it. Roald Hoffmann is a renaissance man with an encyclopedic culture and many talents outside of chemistry. But this blog is about the differences between a grant application and a scientific paper. The link with chemistry will become apparent later.
